Graphical Attack Vectors in Phishing: Is Cyber Security Keeping Up?

Is your anti-phishing technology primed for graphical cyber attack vectors?

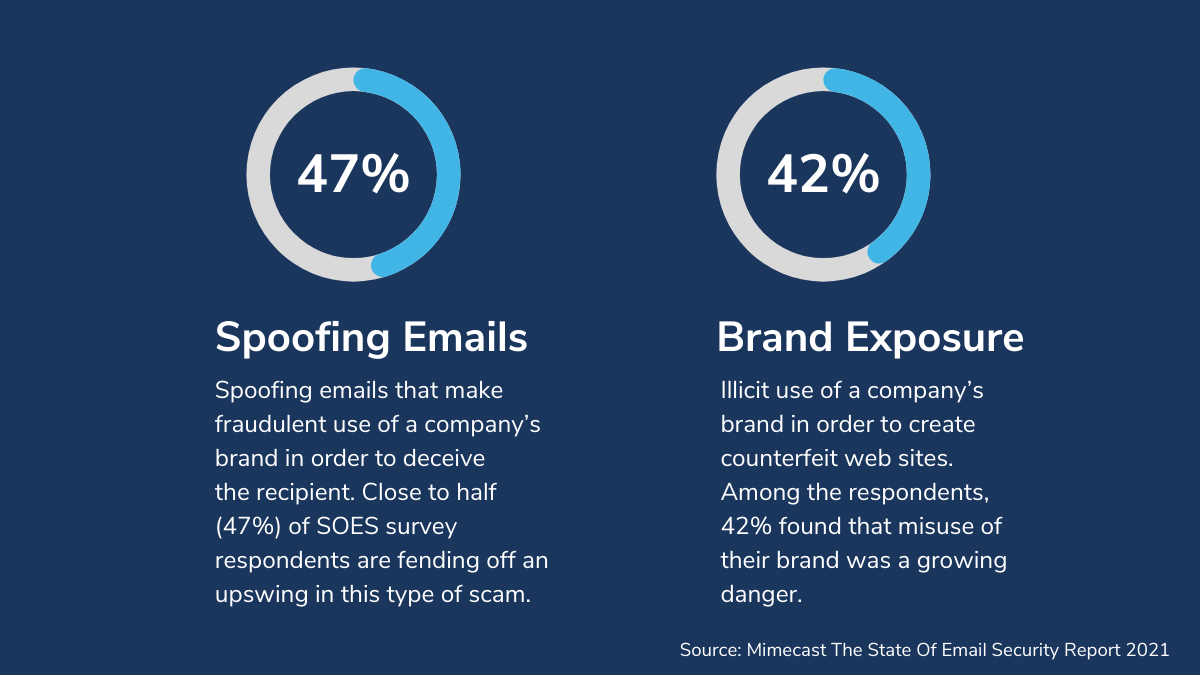

83% of cyber security professionals who responded to a recent poll by VISUA said that they believe their anti-phishing platform is capable of detecting graphical attack vectors. That’s interesting given that a similar percentage also said that they had experienced attacks using these graphical evasion techniques. Meanwhile, 47% of respondents to a recent survey carried out by Mimecast said that they had seen an increase in attacks that spoofed a company’s brand in order to deceive, and 42% of companies felt that misuse of their brand was a growing danger. Taken together, these figures suggest there is a gap in the technology being deployed, and quite a significant one.

So, we have to ask, are the majority of phishing detection platforms really capable of detecting and blocking phishing attacks that weaponise graphics, and if not, why not?

Keeping up with the bad actors

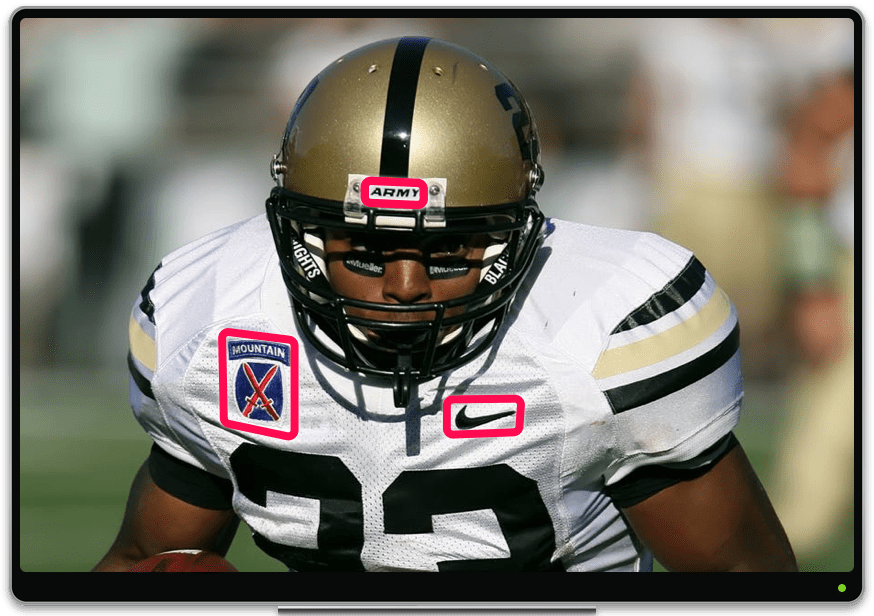

Cyber security professionals are in a constant arms race against bad actors. It can be difficult to stay one step ahead, and not for want of trying. We’ve highlighted before that a large number of phishing attacks use visual markers, such as logos and authority icons and marks. Meanwhile, bad actors are getting ever more ingenious with graphical obfuscation and evasion techniques, such as converting text and even forms to graphics, and adding visual noise, for example by fragmenting key graphics so that they cannot be detected using traditional programmatic analysis, or even with typical computer vision. Cyber security companies therefore need to think differently, not only to keep up with the bad actors’ efforts but to surpass them.

AI-Powered Cyber Attacks

It’s not just the good guys using AI to detect and block threats. Bad actors are increasingly using AI to power ever more sophisticated attacks in higher volumes. These attacks are on the rise because they have proven to be so effective. At the vanguard is email-based phishing. In fact, 91% of successful data breaches and 95% of all enterprise network breaches start as a phishing email. But before the phishing attack comes the social engineering campaign, which can use multiple channels to gather data. It’s in both these stages that graphics can be used to confuse, manipulate and evade.

Graphical attack vectors give these AI-powered attacks an extra degree of effectiveness and success, making phishing attempts more convincing to the human eye and tricking phishing detection systems into thinking that they are legitimate. By exploiting visual elements that we have become accustomed to seeing in business emails and on websites, and by using graphics to hide elements that they don’t want the detection systems to see, bad actors can slip their phishing emails through the net.

Why Traditional Computer Vision Methodology Is Not The Answer

Given the growth of attacks exploiting graphical elements, the logical next step would be to integrate computer vision into your phishing detection tech stack. Indeed, that’s what a number of cyber security companies have done. Unfortunately, there are specific challenges to adding logo detection and other computer vision elements into the workflow.

Like any AI system, computer vision traditionally requires a lot of training to recognise something accurately. Take the PayPal logo for instance. In most cases, you’d need to feed the system 100+ examples of the logo in various uses and positions. Having built the model, the system will do a really good job of recognising that logo, but it can all fall apart pretty quickly when a few factors are considered:

1. Noise

If bad actors know you’re looking for something specific, they can just break it into multiple small parts. Each part means nothing until it’s put back together at render.

2. Modification

If bad actors know that you are looking for a specific logo or visual element and they know how Visual-AI works, they can simply use a different version that the model possibly hasn’t been trained on, like an outdated version of a company logo, or they can add some perspective or place the logo on a ‘noisy’ visual background.

3. Switch Elements

There are multiple computer vision technologies, and they need to work in concert to be effective: logo detection, text detection/OCR, object detection and visual search. If a phishing detection platform is not using all of these technologies, it won’t be able to see when a bad actor converts text into an image, or a form into an image, or for that matter an entire email!

4. Narrow Expertise

Cyber security companies are filled to the brim with very, very smart people. But if we’re honest, their expertise is in the discovery and blocking of cyber threats. It is not in the development and application of a niche and very complex aspect of AI called Visual-AI.

5. Scale, Time, and Cost

Using readily available computer vision technologies, it’s really easy to build a very compelling visual detection system that will run perfectly at low volumes. The problems come when you try to scale that to processing hundreds of millions of detections per day. Unless you have an intimate knowledge of computer vision systems you will have challenges scaling the solution and integrating it into your detection workflow so it can run cost-effectively and without adding significant latency to the process.

6. Model Safety

Bad actors know that AI models can be manipulated. The technical term is ‘model poisoning’, and computer vision models, unless properly implemented and protected, can fall victim to this attack method. A poisoned model will misclassify a visual element, allowing a threat to pass through – a wolf in sheep’s clothing, if you will.

Taking care of all this in-house is extremely challenging. It is not impossible, but it means you have to scale up an expert computer vision team quickly while avoiding these pitfalls. And even if you do that, you can’t possibly innovate at the rate that a dedicated Visual-AI company can. Working with the right computer vision partner will ensure that all these elements are covered and don’t become issues. For instance, VISUA’s Adaptive Learning Engine delivers a function called ‘Instant Learning’. This allows users to begin detecting a new logo, or other visual mark or element, simply by uploading a single example of it!
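To make the ‘Noise’ point above concrete, here is a minimal sketch in Python. It is a toy illustration, not how any real detection product works: the ‘logo’ is a tiny character grid, `contains` is a naive exact-match detector, and `fragment` and `render` are hypothetical helpers invented for this example.

```python
from typing import List

# A toy "image": each string is one row, '#' is ink and '.' is background.
Image = List[str]

LOGO: Image = [
    "##..##",
    "#.##.#",
    "#.##.#",
    "##..##",
]


def contains(image: Image, template: Image) -> bool:
    """Naive detector: True if the template appears anywhere in the image."""
    th, tw = len(template), len(template[0])
    ih, iw = len(image), len(image[0])
    for top in range(ih - th + 1):
        for left in range(iw - tw + 1):
            if all(image[top + r][left:left + tw] == template[r] for r in range(th)):
                return True
    return False


def fragment(image: Image, tile_h: int, tile_w: int) -> List[List[Image]]:
    """Cut the image into a grid of tiles, as an attacker might before sending."""
    return [
        [
            [row[left:left + tile_w] for row in image[top:top + tile_h]]
            for left in range(0, len(image[0]), tile_w)
        ]
        for top in range(0, len(image), tile_h)
    ]


def render(tiles: List[List[Image]]) -> Image:
    """Reassemble the tiles side by side, as a mail client would lay them out."""
    rows: Image = []
    for tile_row in tiles:
        for r in range(len(tile_row[0])):
            rows.append("".join(tile[r] for tile in tile_row))
    return rows


tiles = fragment(LOGO, tile_h=2, tile_w=3)

# Checking each fragment on its own finds nothing...
fragment_hits = [contains(tile, LOGO) for tile_row in tiles for tile in tile_row]

# ...but the fully rendered result contains the logo again.
rendered_hit = contains(render(tiles), LOGO)
```

In this toy setup, no single tile matches the logo, while the reassembled render does. Real logo detection is far more tolerant than exact matching, but the underlying weakness is the same when pieces are analysed in isolation.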

A New Approach In Visual-AI

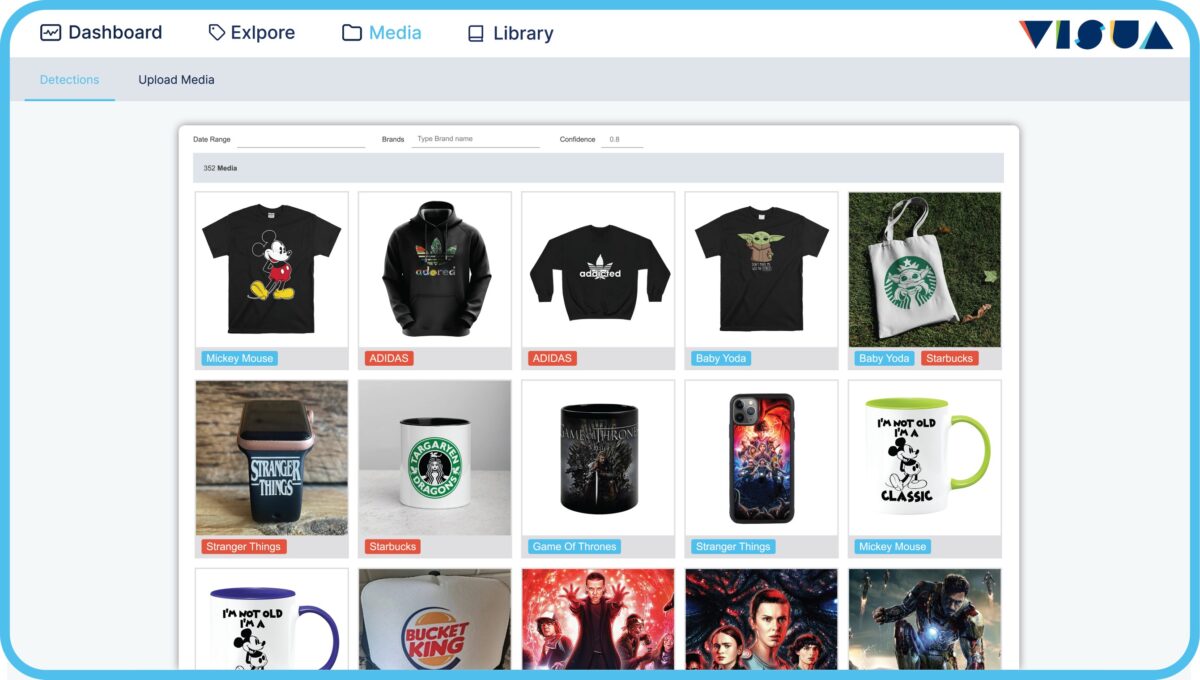

At VISUA we’re not cybersecurity experts, but we are Visual-AI/Computer Vision experts. So, when we were approached by a leading phishing detection platform to investigate how we could collaborate to help them better detect graphical attack vectors, we had an epiphany.

As our CTO and Co-Founder, Alessandro Prest, put it, “For all their ingenious obfuscation and evasion techniques, there comes a time when bad actors need to show their hand. In the user’s email app or browser, the email or page must be fully rendered – no more tricks or gimmicks. We realised that if the phishing detection platform synthesised that experience in a sandbox and captured the screen at that point, we would be able to analyse every element and flag anything visual that could be used as a threat. Words, buttons, logos, forms, icons…anything can be identified, analysed and the phishing platform informed. This way, the bad actor has nowhere left to hide.”

So, how does this work in practice? It’s rather ingenious in its simplicity and works in a three-step process:

1. Rendering & Processing

The first step is to use a safe sandbox to fully render the email or webpage as a flattened image file. Once that’s done, using simple API commands, the image can be processed by our Visual-AI engine, either via the cloud or through an on-premise setup.

2. Flagging

The engine’s job is to process and analyse the render. By implementing logo/mark detection, text detection, visual search and object and scene detection, the engine flags any elements of the email or webpage that may pose a security threat.

3. Final Analysis

The third and final role of VISUA’s technology here is to pass the visual risk signals back to the master phishing detection system, where they are added to the overall threat score of the page. This combines with the analysis carried out by other facets of the security system to give a fuller and more accurate picture. What’s more, this all runs in real time, so it can truly run alongside the platform’s existing analysis framework.
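The three steps above can be sketched roughly as follows. This is an illustrative outline only and is not VISUA’s API: `VisualSignal`, `analyse_message`, the stub renderer and detector, and the simple weighted scoring rule are all assumptions made for the example.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass(frozen=True)
class VisualSignal:
    kind: str    # hypothetical category, e.g. "logo", "text", "form"
    label: str   # what was detected
    risk: float  # hypothetical risk weight between 0.0 and 1.0


def analyse_message(
    raw_message: str,
    render: Callable[[str], bytes],
    detect: Callable[[bytes], List[VisualSignal]],
    programmatic_score: float,
    visual_weight: float = 0.5,
) -> float:
    """Return a combined threat score in the range 0.0 to 1.0."""
    # Step 1: render the email or page to a flattened image in a sandbox.
    screenshot = render(raw_message)
    # Step 2: let the visual engine flag risky elements in the render.
    signals = detect(screenshot)
    # Step 3: add the visual risk to the platform's existing score.
    # Taking the maximum risk and a fixed weight is an assumed rule.
    visual_score = max((s.risk for s in signals), default=0.0)
    return min(1.0, programmatic_score + visual_weight * visual_score)


def stub_render(raw_message: str) -> bytes:
    """Stand-in for a sandboxed renderer; a real one would return image bytes."""
    return raw_message.lower().encode("utf-8")


def stub_detect(screenshot: bytes) -> List[VisualSignal]:
    """Stand-in for a visual engine; flags one made-up brand for the demo."""
    if b"paypal" in screenshot:
        return [VisualSignal(kind="logo", label="PayPal", risk=0.5)]
    return []


plain_score = analyse_message("Hello team", stub_render, stub_detect, 0.25)
spoof_score = analyse_message("<img alt='PayPal'>", stub_render, stub_detect, 0.25)
```

With these stubs, the message carrying the spoofed brand ends up with a higher combined score than the plain one. How a real platform weights visual signals against its programmatic signals is a design decision for that platform.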

Ultimately, it was our deep knowledge of Visual-AI that allowed us to think differently and propose an entirely new approach that has proven extremely effective. It’s especially effective because our visual signals are added to the programmatic signals, allowing the phishing detection platform to make an even more accurate threat assessment.

So, let’s ask that question again…

There are already a number of cybersecurity companies implementing some form of computer vision technology. And there will come a time when Visual-AI becomes another essential step in the phishing detection workflow. Quite frankly, that time can’t come soon enough. So we’ll ask again, is the technology you deploy really capable of detecting and blocking graphical attack vectors? If you’re not so sure now, why not reach out to us to test our software? On the other hand, if you’re one of the organisations that is seeing an increase in emails with graphical spoofing and evasion, speak to your platform provider and share this article.

Visit our website to learn more about Anti-phishing and Visual-AI
