Computer Vision and Content Moderation Applications

An examination of Computer Vision and Content Moderation Applications

From social media to messaging apps, and from gaming services to social marketplaces, trust and safety teams are scrambling to moderate visual content on their platforms. Why? Because the majority of user-generated content is now visual. Every day, 720,000 hours of video and more than 3 billion (yes, billion) images are uploaded, and this visual content is notoriously difficult to moderate. If you’re responsible for maintaining the trust, safety, and compliance of users on your platform, you’ll know the main problem: there are simply not enough of you to handle the enormous volume of visual content. That’s why computer vision is so valuable when it comes to content moderation.

Computer Vision Technology

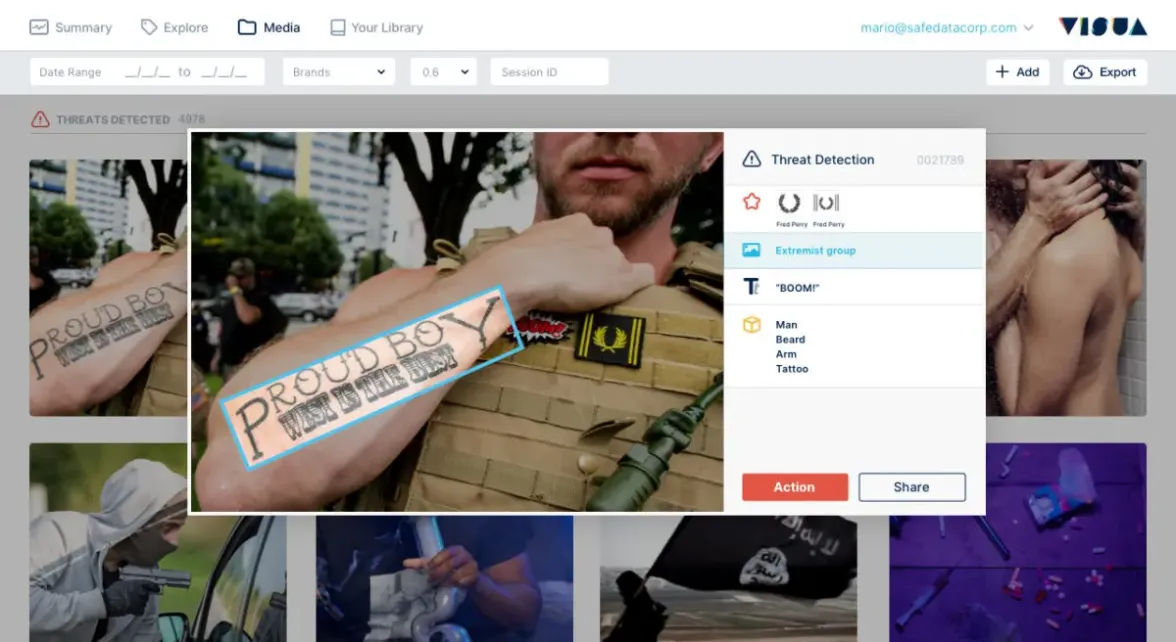

When combined, logo detection, text detection, object detection, and visual search can detect visual elements accurately at scale, and even in real time. Where human moderators get tired or bored, need to go home to their families, or burn out from reviewing disturbing content, computer vision keeps working, and at a far faster rate than humans ever could. Used together, these technologies provide consistent precision when analyzing images.
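To make "combined" more concrete, here is a minimal Python sketch of one way to merge the outputs of several detectors into a single result per label. The `Detection` schema, detector names, labels, and scores are hypothetical and are not VISUA’s API; a production system would also track where in the image or video each finding occurs.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Detection:
    """A single finding from one detector (hypothetical schema)."""
    detector: str      # e.g. "logo", "text", "object", "visual_search"
    label: str         # what was found, e.g. "weapon" or a brand name
    confidence: float  # detector score between 0.0 and 1.0


def merge_detections(detections):
    """Merge per-detector findings into one entry per label.

    Each entry keeps the highest confidence reported for that label
    and the set of detectors that reported it, so agreement between
    independent detectors stays visible downstream.
    """
    merged = {}
    for d in detections:
        entry = merged.setdefault(d.label, {"confidence": 0.0, "detectors": set()})
        entry["confidence"] = max(entry["confidence"], d.confidence)
        entry["detectors"].add(d.detector)
    return merged


results = merge_detections([
    Detection("object", "weapon", 0.81),
    Detection("visual_search", "weapon", 0.93),
    Detection("text", "slur", 0.64),
])
print(results["weapon"]["confidence"])         # 0.93
print(sorted(results["weapon"]["detectors"]))  # ['object', 'visual_search']
```

Taking the maximum confidence is only one possible merge rule. A platform might instead raise confidence when two independent detectors agree on the same label.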

From detecting and transcribing text embedded in images or videos, which moderation of typed text alone would miss, to detecting copyrighted and trademarked designs, these technologies can perform a wide range of tasks and strengthen content moderation across many use cases.

Social Media Platforms

Social media platforms are, unfortunately, breeding grounds for potentially harmful and offensive content. This content is difficult to moderate because much of it is visual, i.e., images and video. In recent years, the trauma that content moderators experience from viewing hours of graphic and upsetting content has also become a subject of growing concern and discussion.

Computer vision helps address both challenges: catching potentially harmful visual content, and reducing the mental health toll on employees who review so much distressing content. Symbols, body parts, specific objects such as weapons, and offensive text can all be detected and either blocked immediately or, for edge cases, referred to the moderation team for manual review.
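The split between blocking immediately and referring edge cases for manual review is commonly implemented with confidence thresholds. The following Python sketch assumes that each item arrives with per-category risk scores between 0 and 1. The `route` function and the threshold values are illustrative assumptions, not values from any real product.

```python
from enum import Enum
from typing import Dict


class Action(Enum):
    ALLOW = "allow"
    REVIEW = "review"  # queue for a human moderator
    BLOCK = "block"


# Hypothetical thresholds; in practice these would be tuned per category.
BLOCK_THRESHOLD = 0.90
REVIEW_THRESHOLD = 0.60


def route(scores: Dict[str, float]) -> Action:
    """Map per-category risk scores for one item to a moderation action.

    Only the highest score matters here: confident hits are blocked
    automatically, and uncertain ones (edge cases) go to human review.
    """
    top = max(scores.values(), default=0.0)
    if top >= BLOCK_THRESHOLD:
        return Action.BLOCK
    if top >= REVIEW_THRESHOLD:
        return Action.REVIEW
    return Action.ALLOW


print(route({"weapon": 0.97}))                  # Action.BLOCK
print(route({"symbol": 0.72, "nudity": 0.10}))  # Action.REVIEW
print(route({}))                                # Action.ALLOW
```

Lowering `REVIEW_THRESHOLD` sends more edge cases to humans. Raising `BLOCK_THRESHOLD` reduces wrongful automatic blocks, but at the cost of more manual review.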

Live Streaming Moderation

Live stream moderation is notoriously difficult for the service provider. Because of its real-time nature, it is often deemed impossible to moderate, and users are left to find a trusted person to moderate their streams. Computer vision, however, enables providers to build a library of images and words that cause the moderation system to flag and remove the offending content and user from the live stream. The service provider can also establish a hierarchy of automatic moderation, ranging from warning the user to banning them immediately. Often, it is the ability to block an infringing user within seconds that deters them from trying again, and so keeps the platform safer in the long run.
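A hierarchy of automatic moderation can be sketched as a per-user escalation ladder. In this minimal Python example, the blocklist labels, the rungs of the ladder, and the `StreamModerator` class are all hypothetical. The example also assumes that an upstream computer vision system has already turned each sampled frame into a set of labels.

```python
from collections import defaultdict
from typing import Optional, Set

# Hypothetical "library" of prohibited labels. Matching actual images or
# words to these labels is the job of the detectors, not shown here.
BLOCKLIST = {"weapon", "extremist_symbol", "slur"}

# Escalation ladder: a user's 1st violation -> "warn", 2nd -> "mute", etc.
# Violations beyond the last rung keep returning the final action.
LADDER = ["warn", "mute", "end_stream", "ban"]


class StreamModerator:
    def __init__(self) -> None:
        self.strikes = defaultdict(int)  # user_id -> violations so far

    def check_frame(self, user_id: str, labels: Set[str]) -> Optional[str]:
        """Return the action for this frame, or None if nothing matched."""
        if not labels & BLOCKLIST:
            return None
        self.strikes[user_id] += 1
        rung = min(self.strikes[user_id], len(LADDER)) - 1
        return LADDER[rung]


mod = StreamModerator()
for labels in [{"crowd"}, {"weapon"}, {"slur"}, {"weapon"}, {"weapon"}]:
    print(mod.check_frame("user-42", labels))
# Prints, one per line: None, warn, mute, end_stream, ban
```

A single offending scene spans many frames, so a real deployment would likely count at most one strike per time window. It might also map the most severe labels straight to a ban.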

Trust and Safety Compliance

There are a number of cases in which user-submitted media might contravene trust and safety rules, or even infringe copyright or trademark law. This has been in the news a lot over the past couple of years, with social marketplaces and print-on-demand companies coming under fire for stocking prints bearing far-right extremist emblems and racist messaging.

Visual-AI protects platforms like these by flagging possible violations, from a misappropriated Disney logo to markings associated with terrorist organizations. This means offending content can be stopped before it is ever made public, or orders can simply be rejected, reducing the overhead of contacting users and issuing refunds.
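Rejecting an order before publication can be as simple as checking the labels detected on a submitted design against a list of restricted categories. This Python sketch assumes a hypothetical `RESTRICTED` mapping and hypothetical label names. It shows the general shape of a pre-publication gate rather than any specific marketplace’s rules.

```python
from dataclasses import dataclass, field
from typing import List

# Hypothetical mapping from detector labels to the policy they breach.
RESTRICTED = {
    "disney_logo": "trademark",
    "extremist_emblem": "hate_symbol",
}


@dataclass
class OrderDecision:
    accepted: bool
    reasons: List[str] = field(default_factory=list)


def review_design(detected_labels: List[str]) -> OrderDecision:
    """Check a submitted design before it is listed or printed."""
    reasons = sorted(
        {f"{RESTRICTED[label]}:{label}" for label in detected_labels if label in RESTRICTED}
    )
    return OrderDecision(accepted=not reasons, reasons=reasons)


print(review_design(["flower", "disney_logo"]))
# OrderDecision(accepted=False, reasons=['trademark:disney_logo'])
print(review_design(["flower"]))
# OrderDecision(accepted=True, reasons=[])
```

Returning the reasons alongside the decision lets the platform tell the user why an order was rejected, instead of handling each case by hand.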

Gaming Platforms

Gaming platforms are often in the news for banning members for misconduct. This could be for breaking gameplay rules or sending messages with words that trigger the platform’s content moderation system.

Their rules and regulations tend to be more stringent than those of most other platforms because of their typically younger user base. Many platforms allow chats in which visual media can be shared, and these require constant moderation. There is also the challenge of monitoring and analyzing the custom skins and characters that players create and use in games. These might contain anything from brand information to NSFW content and hate speech. Visual-AI can detect these visual elements so that the system can flag or block them.

Metaverse

The metaverse is a massively scaled network of virtual, fictional worlds that can be experienced by an unlimited number of users. An often-cited example of this is Second Life.

Although metaverse platforms have been around for more than 20 years, they are expected to grow rapidly over the next decade. That growth could easily be stunted if these platforms fail to manage the content abuse that social platforms inevitably struggle with.

Metaverse visitors have ample opportunity to expose fellow users to inappropriate visual content. It is the platform operators’ responsibility to protect all users, and deploying Visual-AI technology to monitor the virtual environment is the most practical way to do that at scale.

Messaging Apps

Most messaging apps strive to keep communication safe while also preserving freedom of speech. Others, such as apps for workplaces and schools, need to ensure that specific types of content are never shared. Unfortunately, these apps are often used to share highly inappropriate visual content, which, once again, can be difficult to monitor. Computer vision lets these platforms process visual media in real time and either block the content or flag it to the recipient, sender, or moderators as potentially inappropriate.
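A workplace or school app may block content that a general-purpose app would only flag, so the policy is naturally expressed as per-app configuration. The Python sketch below assumes hypothetical app types, category names, and a hypothetical `handle_image` helper. In a real system, the categories would come from the computer vision models.

```python
from typing import Dict, List, Set, Tuple

# Hypothetical per-app policies. The same detector output can lead to
# different outcomes depending on the kind of messaging app.
POLICIES: Dict[str, Dict[str, Set[str]]] = {
    "consumer_chat": {"block": {"extremist_symbol"}, "flag": {"nudity", "violence"}},
    "workplace": {"block": {"extremist_symbol", "nudity", "violence"}, "flag": {"alcohol"}},
}


def handle_image(app: str, categories: Set[str]) -> Tuple[str, List[str]]:
    """Return (action, matched categories) for an image sent in `app`.

    "block" stops delivery; "flag" delivers the image but marks it as
    possibly inappropriate for the recipient, sender, or moderators.
    """
    policy = POLICIES[app]
    blocked = categories & policy["block"]
    if blocked:
        return "block", sorted(blocked)
    flagged = categories & policy["flag"]
    if flagged:
        return "flag", sorted(flagged)
    return "deliver", []


print(handle_image("consumer_chat", {"nudity"}))  # ('flag', ['nudity'])
print(handle_image("workplace", {"nudity"}))      # ('block', ['nudity'])
print(handle_image("workplace", {"cat"}))         # ('deliver', [])
```

Blocking is checked before flagging, so an image that matches both lists is always blocked.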

As the online world becomes not only increasingly visual but increasingly immersive, it’s important for the relevant platforms to stay abreast of the technologies that will help them to maintain the safety of their users and the integrity of their business. There is no doubt that computer vision plays an important role in this.

VISUA offers ‘No-Code’ and API access to our content moderation technology. For more, visit our visual content moderation page.
