Object Detection Helps in Visual Content Moderation

Zooming in on object detection for content moderation

Object detection has the potential to be one of the most powerful tools that companies across a number of industries can have in their armoury when it comes to protecting users, preventing reputational damage and avoiding litigation.

What is object detection?

Object detection is a computer vision technology that identifies objects in images and videos for a defined purpose. It provides platforms with the ability to detect and classify a wide range of common or specified objects. This ranges from top-level objects such as “Mammal” to specific subtypes such as “Dog > Labrador”.
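To make the idea of top-level objects and subtypes concrete, here is a minimal sketch of how a platform might query hierarchical labels. It assumes the detector returns each label as a "Parent > Child" path string; the full path "Mammal > Dog > Labrador" and the helper `label_matches` are illustrative assumptions, not a specific product's output format.

```python
def label_matches(label_path: str, category: str) -> bool:
    """Return True if `category` is one of the levels in a 'Parent > Child' path."""
    levels = [level.strip().lower() for level in label_path.split(">")]
    return category.strip().lower() in levels


# Hypothetical detector output: one hierarchical label per detected object.
detections = ["Mammal > Dog > Labrador", "Mammal > Cat", "Vehicle > Car"]

# A rule written against "Dog" also catches the more specific "Labrador".
dogs = [label for label in detections if label_matches(label, "Dog")]
print(dogs)
```

Matching on any level of the path means a moderation rule can be written once against a broad category and still apply to every subtype beneath it.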

Object detection for content moderation

Object detection can be applied to any number of use cases. From social media to print-on-demand, messaging apps, live-streaming, gaming platforms and even the metaverse, it has an important role to play when it comes to content moderation.

Because it can also operate in real-time, object detection has the power to stop offensive imagery from reaching the end-user as soon as it’s shared. Here, we’ll take a look at some of the ways object detection can help to protect users and platforms across these use cases.

1. Protecting users and employees from seeing disturbing content

Social media, video sharing platforms, messaging apps and some gaming platforms are often utilised by malicious people to send or post incredibly disturbing content.

It is widely reported that content moderators of social media and video sharing platforms in particular often experience symptoms of PTSD, depression and chronic stress as a result of the disturbing images they see every day in their jobs. With computer vision and object detection there really is no need for these workers to experience so much distress while simply trying to earn a living.

Object detection can analyze videos and images at machine speed to detect in real-time the presence of any red-flag objects such as knives or guns, drug paraphernalia, sex-related objects, as well as particular body parts. With this technology, not only are users protected from seeing things that could be potentially scarring but content moderation teams are protected too.
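As a rough illustration of the red-flag check described above, the sketch below filters a detector's output for a frame. The `Detection` structure, the label names and the 0.6 confidence threshold are all assumptions chosen for the example; a real system would use whatever labels and scores its detector provides.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Detection:
    label: str         # object class reported by the detector
    confidence: float  # detector score between 0 and 1


# Illustrative label set based on the categories named above (assumed names).
RED_FLAG_LABELS = {"knife", "gun", "drug paraphernalia"}


def red_flags(detections, min_confidence=0.6):
    """Keep only red-flag detections at or above the confidence threshold."""
    return [
        d for d in detections
        if d.label in RED_FLAG_LABELS and d.confidence >= min_confidence
    ]


# Hypothetical detections for one video frame.
frame = [Detection("knife", 0.91), Detection("dog", 0.88), Detection("gun", 0.35)]
flagged = red_flags(frame)
print(flagged)
```

Here only the knife is flagged: the dog is not a red-flag label, and the low-confidence gun falls below the threshold. Where that threshold sits is a trade-off between missed content and false alarms that each platform would tune for itself.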

2. Legislation compliance

With news of the European Digital Services Act breaking in mid-2022, many platform operators are starting to think about how they can remain compliant with the various regulations under the act.

The act will modernize e-Commerce in relation to the promotion and sale of illegal content, transparent advertising and disinformation online. The goal is to make the internet safer for citizens and visitors to the EU.

From social media and e-Commerce platforms to marketplaces and even domain hosting and cloud providers, this is a real turning point in how content moderation and the protection of platform users are thought about and, crucially, acted upon. The penalty for non-compliance with the legislation will be up to 6% of yearly turnover. Although the act was ratified in Europe, it is believed that other countries will follow suit with similar legislation, so companies affected by the act should consider putting protection measures in place as soon as possible, and certainly before the deadline of January 2024.

Computer vision and object detection will be crucial when it comes to being compliant with the legislation. With object detection giving moderation systems the ability to spot, flag and take appropriate action in real-time, platforms that implement it will be in a more secure place when it comes to this and similar acts.

3. Monitoring misinformation

Misinformation has been a particularly big problem on social media over the last three years, especially during elections and moments of major societal upheaval such as the global Covid-19 pandemic, Brexit and abortion debates. Although many platforms are doing a lot to stop the spread of ‘fake news’, there is plenty of room for improvement. And considering that misinformation falls under the aforementioned European Digital Services Act, it’s something these platforms need to address.

Object detection can help to flag objects commonly associated with these issues, such as images of fetuses and babies as well as vaccine needles. It can also look for inflammatory content such as a hangman’s noose, guns, etc.

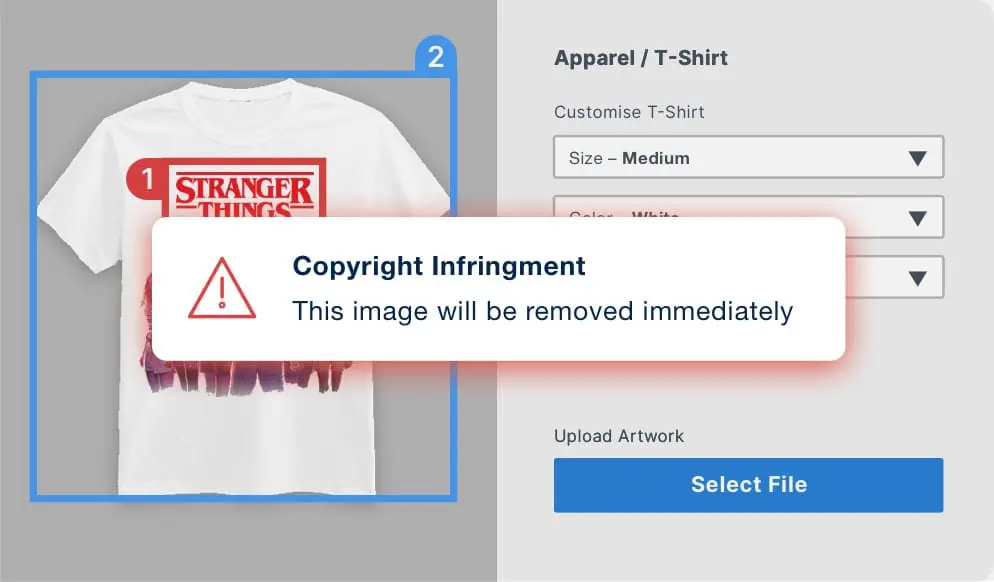

4. Copyright and trademark compliance

Content moderation is not just about preventing the sharing of certain images and videos; it is also relevant in the context of copyright and trademark protection. Online marketplaces and print-on-demand services need to be in a safe position when it comes to protecting themselves from litigation.

A common industry misconception is that terms and conditions, which request marketplace sellers to avoid unauthorized use of protected imagery, designs and logos, put the onus on the seller to be compliant and absolve the platform from liability. However, it has been shown on many occasions that a marketplace or print-on-demand company that allows counterfeit or copyright-infringing goods and designs to be promoted through its site will be the one held liable.

While logo detection and mark detection help companies to locate and flag specific known designs or characters, object detection can be invaluable in searching for infringing products and product designs.

5. Preventing the publication of racist and otherwise offensive material

Social media, messaging apps, online marketplaces and print-on-demand companies are particularly at risk when it comes to the sharing and publication of racist, homophobic, transphobic and otherwise offensive material.

While it’s always been an issue, the increase in the number of platforms available and the continuing rise of the political far-right make it a growing concern for both users and platform operators.

We’ve spoken before about how print-on-demand companies and marketplaces have found themselves in hot water due to creators using their platforms to sell anti-Semitic and racist designs. Meanwhile, social media platforms like Twitter are being heavily utilized by right-wing activists, not to mention individual cases of bullying and harassment on social and messaging apps using images and videos.

Object detection primes content moderation systems to analyze images for items associated with racism, and even scan for concurrently appearing objects that might suggest violence.
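Scanning for concurrently appearing objects can be sketched as a set of co-occurrence rules, each of which fires only when all of its labels are present in the same image. The rule contents and the labels "person" and "crowd" below are hypothetical examples, not a recommended policy.

```python
# Hypothetical rules: every label in a rule must be detected in the same image.
CO_OCCURRENCE_RULES = [
    frozenset({"noose", "person"}),
    frozenset({"gun", "crowd"}),
]


def triggered_rules(labels):
    """Return the rules whose labels are all present among the detected labels."""
    present = set(labels)
    return [rule for rule in CO_OCCURRENCE_RULES if rule <= present]


# A gun on its own triggers nothing; a gun alongside a crowd does.
print(triggered_rules(["gun"]))
print(triggered_rules(["gun", "crowd", "car"]))
```

Combining objects in this way lets a platform treat context differently from a single object in isolation, which helps to reduce false positives.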

Some images or videos containing such imagery may be shared precisely to highlight problematic objects and items, so how the moderation system behaves depends entirely on the paradigms and rules put in place by the platform operators. It can flag the content to the user sharing it to remind them of the consequences of posting such content, it can block the content (and indeed the user) immediately, or it can flag the content for the moderation team to examine manually.
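One way to picture operator-defined rules is as a table that maps detected labels to one of the responses described above. The sketch below is illustrative only: the `Action` names, their severity ordering and the `POLICY` table are assumptions, and each platform would define its own.

```python
from enum import IntEnum


class Action(IntEnum):
    # Ordered by assumed severity so the strictest matching action wins.
    ALLOW = 0
    WARN_USER = 1
    MANUAL_REVIEW = 2
    BLOCK = 3


# Hypothetical operator policy: detected label -> response.
POLICY = {
    "knife": Action.WARN_USER,
    "gun": Action.MANUAL_REVIEW,
    "noose": Action.BLOCK,
}


def decide(labels, policy=POLICY):
    """Return the most severe action triggered by any detected label."""
    actions = (policy.get(label, Action.ALLOW) for label in labels)
    return max(actions, default=Action.ALLOW)


print(decide(["dog"]).name)
print(decide(["knife", "gun"]).name)
```

Keeping the policy as data rather than code means operators can adjust how strictly each object is treated without changing the detection system itself.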

Want to talk about object detection for your platform?

At VISUA, we know that every case is unique and discussing how this kind of technology will work within your organization is imperative to make sure it’s the right fit for your needs. Get in touch with us via the form below and we can arrange a time to discuss your project in detail.
