How Logo Detection is Used in Content Moderation

A close look at logo detection for content moderation

When we think of content moderation, we usually think of social media and video platforms like YouTube and TikTok, and how they moderate content to ensure that unsafe, offensive or otherwise illicit content does not reach their users. But it applies equally to print-on-demand businesses, social commerce platforms and any other kind of user-generated content platform.

These businesses face their own challenges when it comes to content moderation, and logo detection plays an important role in helping them remain compliant and protect their reputation.

What is logo detection?

Logo and mark detection is where it all started for VISUA. It is a fantastic piece of computer vision technology that enables the training of an AI to recognise logos, product and industry marks, as well as popular characters and other unique design elements in any visual media.

Logo detection for Content Moderation

As highlighted above, logo detection aids content moderation in particular industries. While it definitely has its place in social media and video hosting, its major value becomes apparent in industries that are at huge risk of copyright litigation and reputation damage from hosting or selling items that contravene trademark laws or carry harmful and offensive emblems or slogans on them.

It is often incorrectly assumed that on websites where content is largely user-generated, the user bears the responsibility for any copyright infringement. However, the opposite is true; the onus lies with the business hosting and/or facilitating the sale of items carrying the offending content.

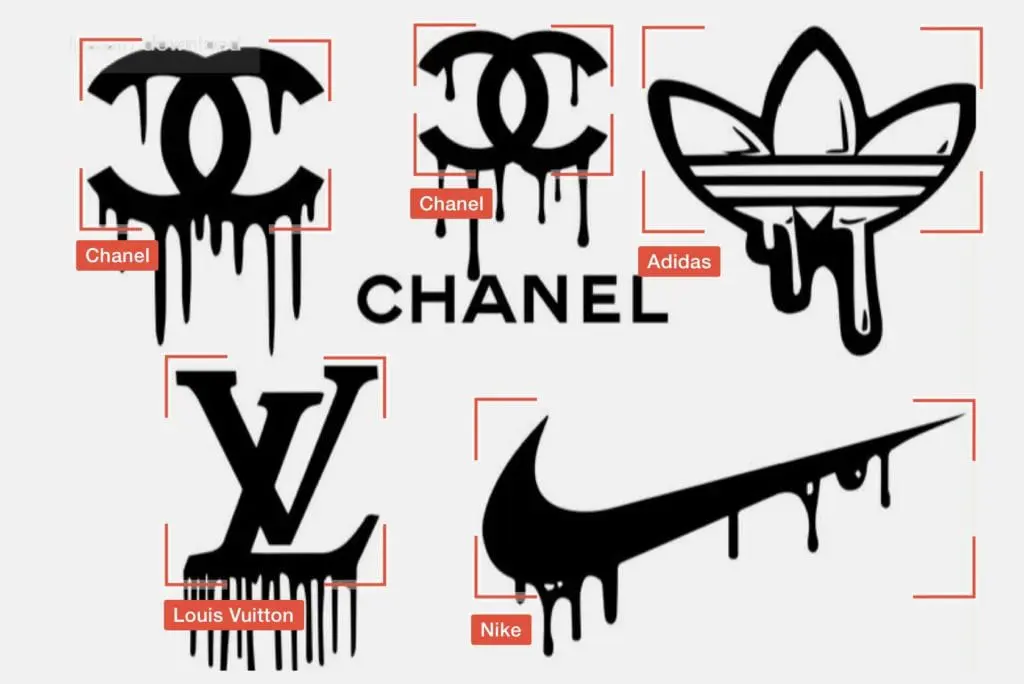

People often underestimate the complexity involved in detecting logos because they fail to consider how AI works. The more data points a model has to work with, the easier it is for it to reach a reliable result. This is why things like facial recognition are, comparatively, much easier than logo detection. A face has hundreds of data points to work with, which is why it’s relatively easy for AI to detect a specific person’s face in a crowd. But now consider a very popular logo, like the Nike swoosh. In essence, it’s a black tick. So, identifying it in images or videos to a high degree of accuracy is much more complex.

There is also the question of fraudsters making subtle modifications to logos, as in the examples below, where we see the original logos on the left and modified versions on the right.

A fit-for-purpose logo detection system must not only recognise close matches but also allow you to easily and quickly add major derivatives to a logo library.
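To make the idea of a logo library with derivatives more concrete, here is a minimal Python sketch. It is an illustration only, not VISUA's implementation or API: the `LogoEntry` and `LogoLibrary` classes, the brand name and the file paths are all hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class LogoEntry:
    """One brand in a hypothetical logo library."""
    brand: str
    reference_images: list[str] = field(default_factory=list)
    derivative_images: list[str] = field(default_factory=list)


class LogoLibrary:
    """Hypothetical in-memory library; a real system would store trained models."""

    def __init__(self) -> None:
        self._entries: dict[str, LogoEntry] = {}

    def add_logo(self, brand: str, image_path: str) -> None:
        # Register an original logo, creating the brand entry if needed.
        entry = self._entries.setdefault(brand, LogoEntry(brand))
        entry.reference_images.append(image_path)

    def add_derivative(self, brand: str, image_path: str) -> None:
        # Attach a modified version of a logo to a brand that already exists.
        if brand not in self._entries:
            raise KeyError(f"unknown brand: {brand}")
        self._entries[brand].derivative_images.append(image_path)

    def images_for(self, brand: str) -> list[str]:
        # Originals first, then derivatives, so both can be matched against.
        entry = self._entries[brand]
        return entry.reference_images + entry.derivative_images


library = LogoLibrary()
library.add_logo("ExampleBrand", "logos/examplebrand_original.png")
library.add_derivative("ExampleBrand", "logos/examplebrand_modified.png")
```

Keeping derivatives under the same brand entry means that a newly spotted modification can be added without touching the original reference images.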

So, let’s take a look at where logo detection might be used in the context of content moderation.

1. Print-On-Demand

Print-On-Demand businesses like Vistaprint and GearLaunch face the same challenges as social commerce platforms. With users granted the ability to upload their own designs, they are wide open to litigation, and it is widely reported that including a copyright disclaimer is not enough.

In this situation, logo and mark detection works by comparing uploaded graphics with the logos and marks in a library, then either stopping the publication of offending items or alerting the content moderation team to their existence, as per the platform’s requirements.

This can help prevent repeats of the Harley-Davidson vs SunFrog case of 2017, in which the print-on-demand business was sued for millions, setting a precedent for further litigation against companies similar to SunFrog.

2. Social Commerce/Ecommerce

Social commerce websites like Etsy and ecommerce functions on popular apps like Instagram and Pinterest are primed for copyright litigation with people uploading their own products and designs that often carry brand logos or imagery and likenesses from popular movies and TV shows.

They are also at high risk of hosting products with hateful and offensive slogans, or indeed illicit and counterfeit products as well as controlled substances. Not only can this lead to legal problems, but it can also cause serious reputational damage. In 2021, Etsy faced calls for a boycott when it was discovered that many of the racist t-shirts worn by Capitol Hill protestors were purchased on its platform.

Logo and mark detection can help prevent these things from happening by monitoring product uploads and comparing the imagery at machine speed to a library of marks and logos. So, if someone is trying to sell knock-off products with Nike logos, or with an emblem known to represent a far-right extremist organization, the upload can be blocked before it goes live on the platform. This frees up the moderation team to manually review only the less obvious product uploads, resulting in a much more effective system overall.
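The split between automatic blocking and manual review can be sketched in a few lines of Python. This is a simplified illustration under stated assumptions, not a description of any particular product: the `Detection` type, the `triage` function, the watchlist labels and both threshold values are hypothetical, and the detections are assumed to come from a separate logo detection step that reports a confidence score between 0 and 1.

```python
from dataclasses import dataclass
from enum import Enum


class Action(Enum):
    BLOCK = "block"      # stop the upload before it goes live
    REVIEW = "review"    # send to the human moderation team
    APPROVE = "approve"  # publish without manual review


@dataclass(frozen=True)
class Detection:
    label: str         # name of the logo or mark that was matched
    confidence: float  # 0.0 to 1.0, from a hypothetical detector


# Assumed thresholds; a real platform would tune these to its own policy.
BLOCK_THRESHOLD = 0.90
REVIEW_THRESHOLD = 0.50


def triage(detections: list[Detection], watchlist: set[str]) -> Action:
    """Decide what to do with one upload based on watchlisted detections."""
    hits = [d.confidence for d in detections if d.label in watchlist]
    if not hits:
        return Action.APPROVE
    best = max(hits)
    if best >= BLOCK_THRESHOLD:
        return Action.BLOCK
    if best >= REVIEW_THRESHOLD:
        return Action.REVIEW
    return Action.APPROVE


watchlist = {"nike", "extremist_emblem"}
decision = triage([Detection("nike", 0.97)], watchlist)
print(decision.value)
```

Only uploads that fall between the two thresholds reach the moderation team, which is what keeps the manual workload limited to the less obvious cases.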

3. Gaming platforms and online games

An industry you may not think of when it comes to copyright infringement or harmful content, the gaming industry is in fact increasingly under the microscope when it comes to these things.

Many games allow users to upload their own content and designs to worlds or characters they have created. Some even allow users to sell these designs within the platform in the form of skins, outfits and playable characters. Naturally, this raises a number of content moderation challenges around copyright infringement and the publication of offensive material.

This issue was raised on a subreddit in which someone expressed their anger at discovering a group selling Nazi uniforms, some including the swastika, on the popular online game Roblox. Some Reddit users said that this was not even the worst thing they had seen on the platform, with one saying they felt that reporting things to Roblox clearly wasn’t having an effect. This raises the question: why are these designs even making it onto the platform?

Logo and mark detection significantly reduces the likelihood of such content making it onto the platform.

4. NFT Marketplaces

NFT Marketplaces are certainly the newest platform to feature on this list and their popularity has skyrocketed in recent times. With NFTs being purely user-generated, you can see how their hosts could run into trouble.

In an article for Fortune, intellectual property and complex commercial litigation attorney Jonathon Schmalfeld warns that copyright infringement lawsuits could “crash the NFT party” and render it hopeless. He advises users to “play it safe” and not engage with any NFTs featuring brands or known characters that look like they have not been minted by the original creators. Playing it safe might be great advice for users, but marketplaces that sell NFTs need to be proactive by putting logo and mark detection in place to prevent unauthorised minting and sales of NFTs with trademark and copyright protected imagery. This helps reduce the risk of future lawsuits and ensures that no bridges are burned between the platform and potential brand partners.

Want To Talk?

VISUA is the leader in logo detection with real-time capabilities. If you wish to explore the inclusion of visual search into your moderation tech stack, get in touch by filling out the form below. To learn more about content moderation, visit our dedicated visual content moderation page.
